Downloading all the images from a webpage is easier with Python than by hand. Web scraping reduces a tedious, repetitive task to a few lines of code.

The BeautifulSoup and requests modules can be considered the strongest weapons in a web scraper's arsenal. Both can be installed using pip from a command shell, for example `pip install requests beautifulsoup4`. Since we want image data, we'll use the `img` tag with BeautifulSoup, which extracts all the details from the tags: for example, `images = bookcontainer.findAll('img')` collects every `img` element inside `bookcontainer`, and `images[0]` is the first of them. Using the `get()` method, the source of each image is then stored in a list. The content of these images is fetched and written to image files using Python's file handling. If you don't already know which tags hold the data you want to scrape, a quick Google search will usually tell you. The key idea of the video is to demonstrate how to use Python and the web grabber Chrome extension to scrape dynamically rendered HTML content.
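As a minimal sketch of the tag-extraction idea, the snippet below parses a small HTML string, collects the `src` of every `img` tag with `get()`, and resolves relative paths against a base URL. The sample markup, the `example.com` URLs, and the function name `image_sources` are invented for illustration and are not taken from the page used in this post. It assumes the `beautifulsoup4` package is installed, and it uses `find_all`, of which `findAll` is the older alias.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def image_sources(html, base_url):
    """Return the absolute URL of every <img> tag that has a src attribute."""
    soup = BeautifulSoup(html, "html.parser")
    sources = []
    for img in soup.find_all("img"):
        src = img.get("src")  # None when the tag has no src attribute
        if src:
            # Relative paths such as /media/cover.png need the page URL as a base.
            sources.append(urljoin(base_url, src))
    return sources


# Invented sample markup, used only to illustrate the calls.
sample_html = """
<div>
  <img src="/media/cover.png" alt="cover">
  <img src="https://cdn.example.com/photo.jpg">
  <img alt="no source here">
</div>
"""

print(image_sources(sample_html, "https://example.com/blog/post"))
```

For the sample markup, this prints a list with two URLs: the first is resolved against the base URL, the second is already absolute, and the tag without a `src` is skipped.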

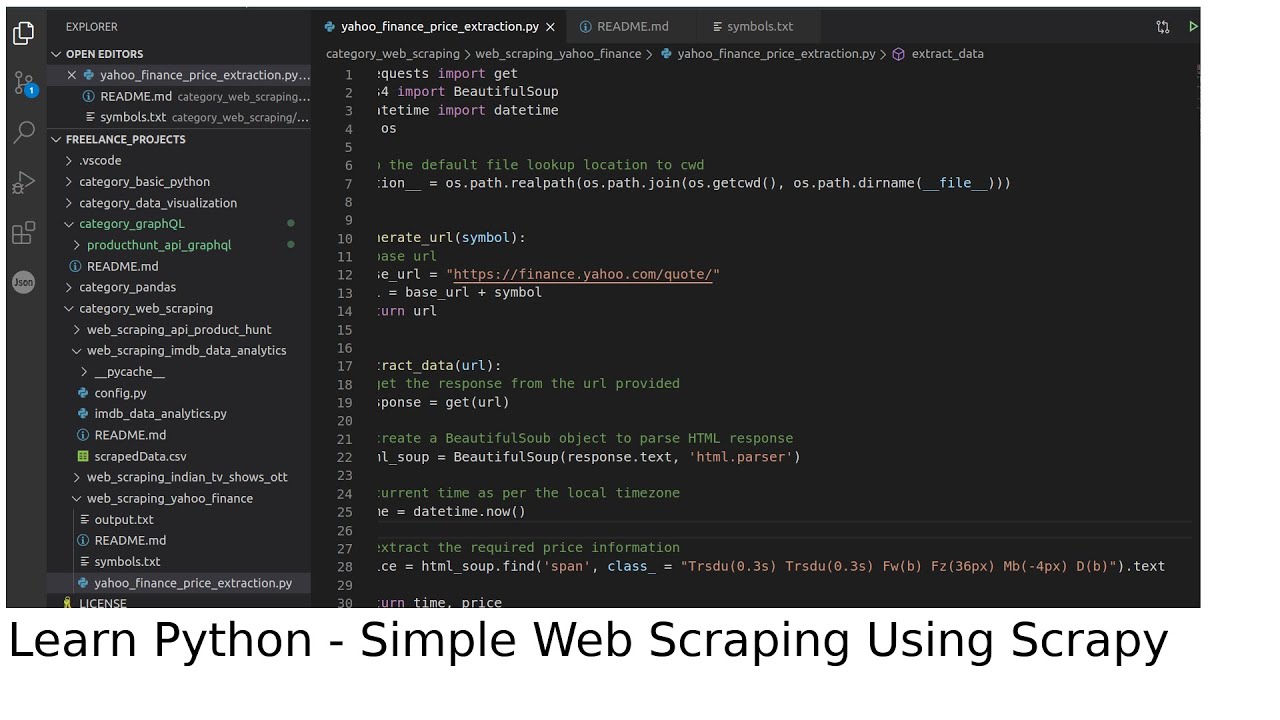

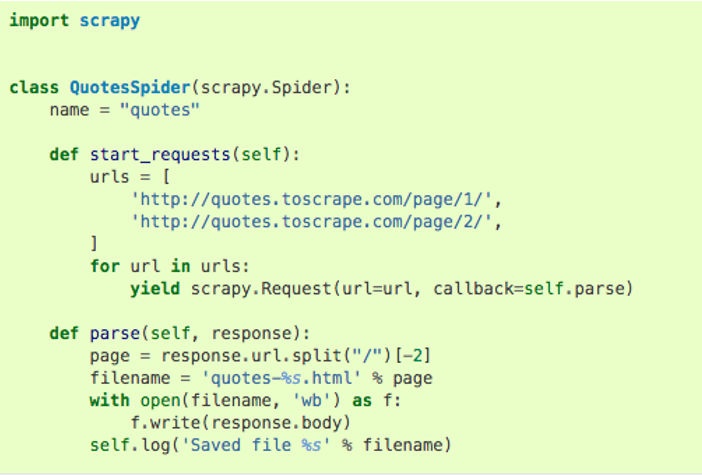

Downloading all the images from a webpage individually can be a headache and a time-consuming process. Creating a short Python script makes the work easier. The tools used in the code are BeautifulSoup and the requests library. The requests library gets the HTML text of the webpage, also known as its source code. BeautifulSoup is a well-known web scraping library that parses that HTML and retrieves content from the webpage based on HTML tags. An image in a webpage is usually implemented using the HTML `<img>` tag, which is useful information when writing the code.

The website used in the code is a blog on Yoast. The blog teaches users to develop better SEO using images, and it has been selected because it contains a lot of images (see the screenshot of the website to be used). Before proceeding further, we highly recommend reading our article titled 'Python Web Scraping Tutorial: Step-By-Step'.

For this image scraping tutorial, you'll use the requests library to fetch a web page containing the target images. You'll then pass the response from that website into BeautifulSoup to grab all image link addresses from `img` tags. Create a separate folder for the downloaded images using the `mkdir` method in `os`: `os.mkdir(foldername)`. Iterate through all the images and get the source URL of each one. After getting the source URL, the last step is to download the image and write it to a file in that folder.

In this blog post we learned how to use Python to scrape all cover images of Time magazine. To accomplish this task, we utilized Scrapy, a fast and powerful web scraping framework. Overall, our entire spider file consisted of fewer than 44 lines of code, which really demonstrates the power and abstraction behind the Scrapy library.

The desire to download all images or video on the page has been around since the beginning of the internet. Twenty years ago I would accomplish this task with a Python script I downloaded. I then moved on to browser extensions for this task, then started using a PhearJS Node.js JavaScript utility to scrape images. All of these solutions are nice, but I wanted to know how I could accomplish this task from the command line. To scrape images (or any specific file extensions) from the command line, you can use wget: `wget -nd -H -p -A jpg,jpeg,png,gif -e robots=off`, followed by the URL of the page to scrape. The command above downloads images across hosts (i.e. from a CDN or other subdomain) to the directory from which the command is run. You'll see downloaded media as they come down, with a line such as `18:33:26 (205 MB/s) - '1490571194319s.jpg' saved` for each file and a closing summary such as `Downloaded: 66 files, 412K in 0.2s`.
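Returning to the Python approach, the folder-creation, iteration, and file-writing steps described earlier can be sketched as follows. This is a rough illustration under stated assumptions, not the original script: the names `filename_for` and `download_images` are hypothetical, and the actual HTTP call is passed in as a `fetch` function so the file-handling logic can be read (and tried) without a network connection. It uses `os.makedirs(..., exist_ok=True)` in place of the `os.mkdir(foldername)` call mentioned above so that an existing folder does not raise an error.

```python
import os
from urllib.parse import urlparse


def filename_for(url, index):
    """Pick a local file name from the URL path, falling back to a numbered name."""
    name = os.path.basename(urlparse(url).path)
    return name if name else f"image_{index}.jpg"


def download_images(image_urls, folder, fetch):
    """Save every image URL into `folder`; `fetch(url)` must return the image bytes."""
    os.makedirs(folder, exist_ok=True)  # create the target folder if it is missing
    saved = []
    for index, url in enumerate(image_urls):
        try:
            data = fetch(url)
        except Exception as error:  # keep going if a single image fails
            print(f"Failed to download {url}: {error}")
            continue
        path = os.path.join(folder, filename_for(url, index))
        with open(path, "wb") as image_file:  # binary mode: image data is bytes
            image_file.write(data)
        saved.append(path)
    return saved
```

With the requests library installed, `fetch` could be a small function that calls `requests.get(url, timeout=10)`, calls `raise_for_status()` on the response, and returns `response.content`. The list of image URLs would come from the `src` attributes of the `img` tags found by BeautifulSoup.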
